(PDF) The modified Cohen's kappa: Calculating interrater agreement for segmentation and annotation | lu Lu - Academia.edu

![Guidelines for Reporting Reliability and Agreement Studies (GRRAS) were proposed. | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/484c886567487dd092a508e8ceceeb2b212f2c2d/6-Table2-1.png)

[PDF] Guidelines for Reporting Reliability and Agreement Studies (GRRAS) were proposed. | Semantic Scholar

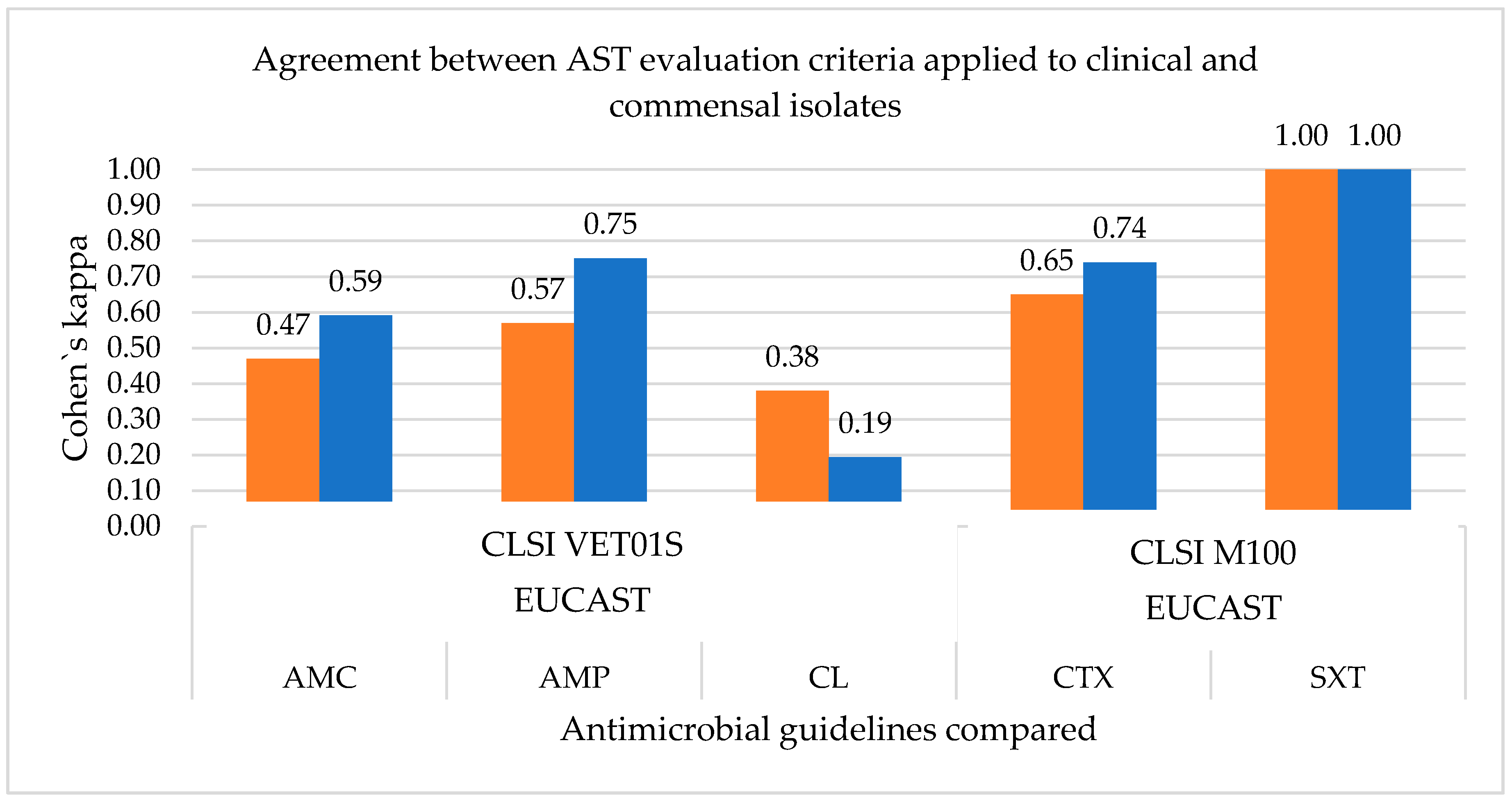

Veterinary Sciences | Free Full-Text | Antimicrobial Resistance of Clinical and Commensal Escherichia coli Canine Isolates: Profile Characterization and Comparison of Antimicrobial Susceptibility Results According to Different Guidelines

Cohen's Kappa, Positive and Negative Agreement percentage between AT... | Download Scientific Diagram

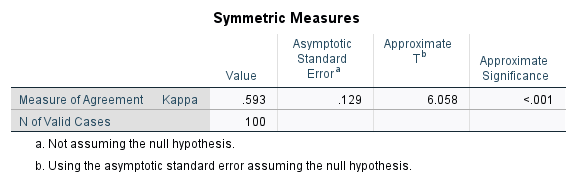

Interpretation of Kappa Values. The kappa statistic is frequently used… | by Yingting Sherry Chen | Towards Data Science

Interrater agreement and interrater reliability: Key concepts, approaches, and applications - ScienceDirect